What is Kubernetes? A Guide to Container Orchestration

Containers have reshaped software development by letting dev teams isolate apps in self-contained environments that run on multiple operating systems. As container tools like Docker become more popular, managing those containers sensibly has become the next workflow challenge for DevOps teams.

That’s why more dev teams are turning to Kubernetes to manage their production-grade containers. Designed with the same principles that allow Google to run a network of billions of containers, Kubernetes presents a secure, scalable, and efficient solution for development teams.

We know you’re busy developing, but if you have 5 minutes, you can read this brief guide to Kubernetes and still have time to grab a snack. Sound good? Let’s jump in!

What Is Kubernetes?

Kubernetes, Greek for “helmsman of a ship,” is an open source system designed to manage containerized services. The Kubernetes project, an offshoot of Google’s internal Borg system, was released by Google in 2014 with the aim of providing a flexible computing, networking, and storage infrastructure environment for developers. Instead of reusing code from its existing Borg system, Google designed the Kubernetes platform to orchestrate Docker containers.

Kubernetes is hosted by the Cloud Native Computing Foundation (CNCF) and blends the simplicity of Platform as a Service (PaaS) with the stability of Infrastructure as a Service (IaaS). It provides optional and pluggable building blocks for developers. This gives dev teams the ability to package application containers confidently, regardless of which infrastructure provider they run on.

Why Kubernetes?

A complete cloud application today is far more than just written code. It also needs supporting pieces, from a persistent remote disk that backs the application to load balancing and autoscaling groups, so a container system has to be robust and flexible.

Kubernetes allows teams to run applications on any cloud infrastructure, whether public or private, on servers running Windows or Linux. And while Kubernetes provides flexible functionality, it can also support application-specific workflows.

Being a community-governed open source project also gives Kubernetes more flexibility than Docker Swarm, which is open source but controlled by Docker, Inc.

The Kubernetes control plane is built on the same APIs that are available to developers and users. Users can write their own controllers, such as schedulers, with their own APIs that can be targeted by a general-purpose command-line tool.
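To make the idea of a controller more concrete, here is a toy sketch of the control-loop pattern: compare the desired state with the actual state and act on the difference. Everything in it is an assumption made for illustration. `ToyCluster` is an in-memory stand-in, not a real Kubernetes client, and the names `reconcile`, `start_container`, and `stop_container` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """In-memory stand-in for cluster state (hypothetical, not a Kubernetes API)."""
    desired_replicas: int
    running: list = field(default_factory=list)
    _next_id: int = 0

    def start_container(self):
        # Give every new container a unique name and record it as running.
        name = f"app-{self._next_id}"
        self._next_id += 1
        self.running.append(name)
        return name

    def stop_container(self):
        # Stop the most recently started container.
        return self.running.pop()


def reconcile(cluster):
    """Run one pass of the loop and return the list of actions taken."""
    actions = []
    while len(cluster.running) < cluster.desired_replicas:
        actions.append(("start", cluster.start_container()))
    while len(cluster.running) > cluster.desired_replicas:
        actions.append(("stop", cluster.stop_container()))
    return actions


cluster = ToyCluster(desired_replicas=3)
print(reconcile(cluster))        # starts three containers
cluster.running.remove("app-1")  # simulate a crashed container
print(reconcile(cluster))        # starts one replacement
```

A real controller would watch the Kubernetes API for changes rather than mutate a local list, but the shape of the loop is the same: observe, compare, act, repeat.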

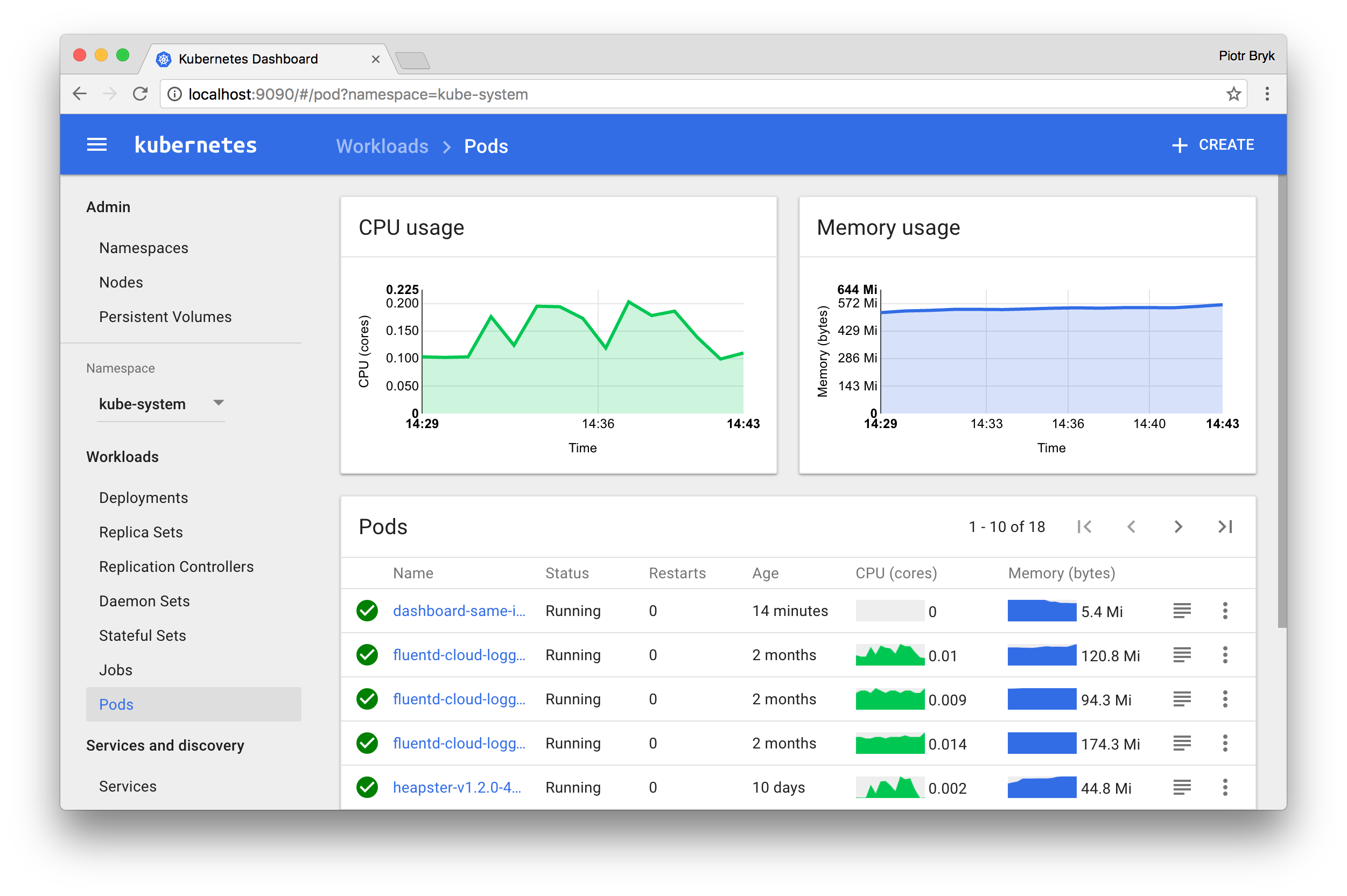

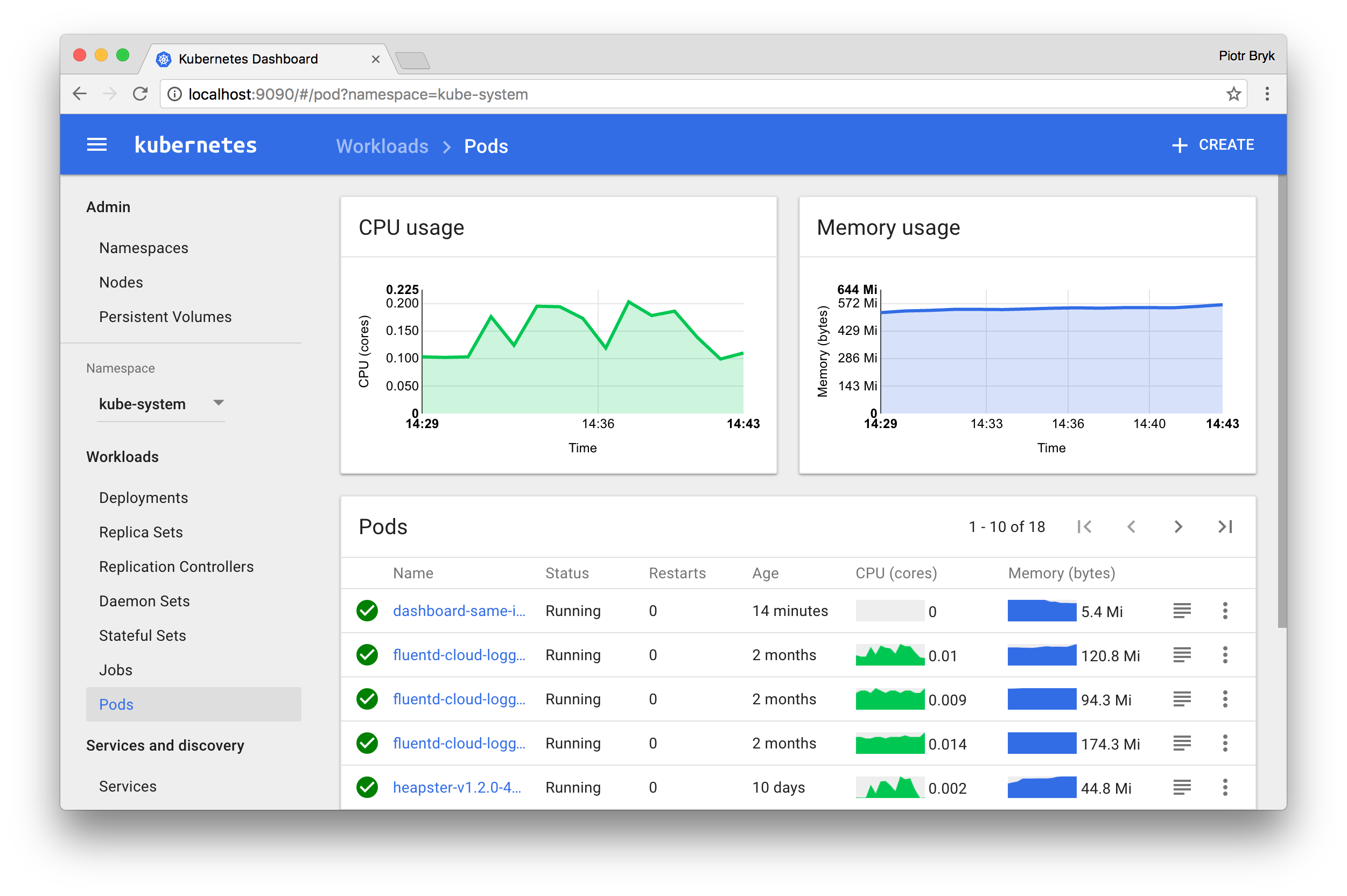

The Kubernetes UI dashboard can show users resource utilization inside their cluster. Teams get an overview of how clusters are performing and can implement scaling changes more easily.
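If you want a feel for how utilization numbers turn into a scaling decision, the sketch below follows the replica calculation described in the Kubernetes Horizontal Pod Autoscaler documentation: scale the current replica count by the ratio of the observed metric to the target metric, round up, and skip the change when the ratio is close to 1. It is a simplified illustration, not the autoscaler itself. It ignores missing metrics, pod readiness, and stabilization windows, and the function name and parameters are our own.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Estimate how many replicas are needed to bring the metric back to target."""
    ratio = current_metric / target_metric
    # Leave the replica count alone when usage is already close to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Keep the result inside the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))


# Four replicas averaging 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
```

The tolerance band is there to stop the replica count from flapping when usage hovers around the target.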

Teams can automate restarts for containers that fail, and replace or reschedule containers when nodes die. Kubernetes can also kill containers that don’t respond to a user-defined health check.
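As a rough illustration of a user-defined health check, the sketch below builds a Deployment manifest with an HTTP liveness probe and prints it as JSON. The field names (`livenessProbe`, `httpGet`, `periodSeconds`, and so on) come from the Kubernetes API, but the app name, image, port, health path, and timing values are all placeholders we made up for the example.

```python
import json


def deployment_with_liveness_probe(name, image, replicas=3, port=8080,
                                   health_path="/healthz"):
    """Build a Deployment manifest whose container is restarted when the probe fails."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": name,
                        "image": image,
                        "ports": [{"containerPort": port}],
                        # The kubelet calls this endpoint and restarts the
                        # container after repeated failures.
                        "livenessProbe": {
                            "httpGet": {"path": health_path, "port": port},
                            "initialDelaySeconds": 10,
                            "periodSeconds": 5,
                            "failureThreshold": 3,
                        },
                    }],
                },
            },
        },
    }


# "web" and "example.com/web:1.0" are hypothetical placeholders.
manifest = deployment_with_liveness_probe("web", "example.com/web:1.0")
print(json.dumps(manifest, indent=2))
```

Since kubectl accepts JSON as well as YAML, the printed output could be saved to a file and applied with `kubectl apply -f`, assuming the image and health endpoint actually exist.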

Kubernetes Best Practices

Developers should first decide whether they need a simple local Docker-based solution or a more robust hosted solution. Dev teams should factor in their size, goals, and frequency of rollouts.

Kubeadm-dind provides a multi-node Kubernetes cluster; it requires a Docker daemon and uses Docker-in-Docker techniques to spawn the cluster. This is a good fit for smaller teams evaluating Kubernetes clusters. Hosted solutions for larger teams include Google’s own Kubernetes Engine, as well as Amazon Elastic Container Service for Kubernetes and Azure Container Service.

When teams are configuring clusters, they should identify the latest stable API version. Configuration files should be stored in version control before being pushed to clusters. This ensures a quick rollback if a configuration change needs to be reverted.
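One lightweight way to act on the stable-API advice is to check manifests before they are committed. The sketch below relies on the Kubernetes naming convention for API versions, where `v1` is stable while `v1beta1` and `v2alpha1` are pre-release. The helper names `stability` and `unstable_manifests` are hypothetical, and the manifests are assumed to be already parsed into dictionaries.

```python
import re

# Matches v1, v2beta1, v1alpha3, and similar version strings.
_VERSION = re.compile(r"^v(\d+)(?:(alpha|beta)(\d+))?$")


def stability(api_version):
    """Return 'stable', 'beta', or 'alpha' for a value such as 'apps/v1'."""
    # Drop the optional API group prefix, e.g. 'apps/' or 'networking.k8s.io/'.
    version = api_version.rsplit("/", 1)[-1]
    match = _VERSION.match(version)
    if match is None:
        raise ValueError(f"unrecognized apiVersion: {api_version!r}")
    return match.group(2) or "stable"


def unstable_manifests(manifests):
    """List (name, apiVersion) pairs for manifests that use a pre-release API."""
    return [
        (m["metadata"]["name"], m["apiVersion"])
        for m in manifests
        if stability(m["apiVersion"]) != "stable"
    ]


manifests = [
    {"apiVersion": "apps/v1", "metadata": {"name": "web"}},
    {"apiVersion": "extensions/v1beta1", "metadata": {"name": "legacy-ingress"}},
]
print(unstable_manifests(manifests))
```

A check like this could run in a pre-commit hook or CI job, so pre-release API versions get flagged before a configuration ever reaches a cluster.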

What are the Kubernetes trends?

Kubernetes is backed by a diverse community of open source developers and a growing ecosystem that supports rollouts and changes to application configuration. Development teams can connect on the Slack channel or join the Kubernetes-dev Google group, which has weekly community meetings to discuss Kubernetes trends.

Kubernetes Cluster Federation, first released back in July 2016, is an increasingly popular way to scale Kubernetes clusters. Federation allows teams to improve utilization and save team resources by syncing multiple clusters together. Kubernetes can also automatically mount the storage system of your choice, whether cloud or network storage.

Dev teams should also keep an eye on the etcd Operator, which manages etcd clusters. etcd is the primary datastore for Kubernetes, storing and replicating all cluster state. The operator is under active development, with planned features announced.

What’s the future of Kubernetes development?

Teams incorporating the latest Kubernetes trends have the advantage of scaling with great efficiency. Container technology is accelerating, with its market expected to grow roughly 40% per year, from $762 million in 2016 to over $2.7 billion by 2020.

As more enterprise organizations adopt containers, knowledge of container management services will be crucial for teams aiming to build mature DevOps processes without depending on infrastructure providers.

There you have it! Everything you need to know about Kubernetes. Remember, check out the rest of Stackify’s blog for more resources on software development, DevOps, and developer tools.
