What Are Software Metrics and How Can You Track Them?

By: Alexandra | September 16, 2017

A software metric is a measure of software characteristics that are quantifiable or countable. Software metrics are important for many reasons, including measuring software performance, planning work items, measuring productivity, and many other uses.

Within the software development process, there are many metrics that are all related to each other. Software metrics are related to the four functions of management: Planning, Organization, Control, and Improvement.

In this article, we are going to discuss several topics including many examples of software metrics:

- Benefits of Software Metrics

- How Software Metrics Lack Clarity

- How to Track Software Metrics

- Examples of Software Metrics

Benefits of Software Metrics

The goal of tracking and analyzing software metrics is to determine the quality of the current product or process, improve that quality and predict the quality once the software development project is complete. On a more granular level, software development managers are trying to:

- Increase return on investment (ROI)

- Identify areas of improvement

- Manage workloads

- Reduce overtime

- Reduce costs

These goals can be achieved by providing information and clarity throughout the organization about complex software development projects. Metrics are an important component of quality assurance, management, debugging, performance, and estimating costs, and they’re valuable for both developers and development team leaders:

- Managers can use software metrics to identify, prioritize, track and communicate any issues to foster better team productivity. This enables effective management and allows assessment and prioritization of problems within software development projects. The sooner managers can detect software problems, the easier and less expensive the troubleshooting process is.

- Software development teams can use software metrics to communicate the status of software development projects, pinpoint and address issues, and monitor, improve on, and better manage their workflow.

Software metrics offer an assessment of the impact of decisions made during software development projects. This helps managers assess and prioritize objectives and performance goals.

How Software Metrics Lack Clarity

Terms used to describe software metrics often have multiple definitions and ways to count or measure characteristics. For example, lines of code (LOC) is a common measure of software development. But there are two ways to count each line of code:

- One is to count each physical line that ends with a return. But some software developers don’t accept this count because it may include lines of “dead code” or comments.

- To get around those shortfalls and others, each logical statement could be considered a line of code.

Thus, a single software package could have two very different LOC counts depending on which counting method is used. That makes it difficult to compare software simply by lines of code or any other metric without a standard definition, which is why establishing a measurement method and consistent units of measurement to be used throughout the life of the project is crucial.
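The gap between the two counting methods can be illustrated with a minimal sketch. It assumes the code being measured is Python, counts a physical line as anything ending in a line break, and counts a logical line as one parsed statement. The function names are hypothetical, and real LOC tools apply their own language-specific rules.

```python
import ast


def count_physical_lines(source: str) -> int:
    """Count every line of text, including blank lines and comments."""
    return len(source.splitlines())


def count_logical_lines(source: str) -> int:
    """Count parsed statements, ignoring comments and blank lines."""
    tree = ast.parse(source)
    return sum(isinstance(node, ast.stmt) for node in ast.walk(tree))


# Hypothetical snippet used only to compare the two counts.
sample = """# compute a total
total = 0

for n in range(3):
    total += n  # running sum
"""

print(count_physical_lines(sample))  # 5 physical lines
print(count_logical_lines(sample))   # 3 logical statements
```

The same file yields 5 or 3 depending on the method, which is why the counting rule has to be fixed before any LOC-based comparison.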

There is also an issue with how software metrics are used. If an organization uses productivity metrics that emphasize volume of code and errors, software developers could avoid tackling tricky problems to keep their LOC up and error counts down. Software developers who write a large amount of simple code may have great productivity numbers but not great software development skills. Additionally, software metrics shouldn’t be monitored simply because they’re easy to obtain and display – only metrics that add value to the project and process should be tracked.

How to Track Software Metrics

Software metrics are great for management teams because they offer a quick way to track software development, set goals and measure performance. But oversimplifying software development can distract software developers from goals such as delivering useful software and increasing customer satisfaction.

Of course, none of this matters if the measurements that are used in software metrics are not collected or the data is not analyzed. The first problem is that software development teams may consider it more important to actually do the work than to measure it.

It becomes imperative to make measurements easy to collect, or they will not be collected. Make the software metrics work for the software development team so that it can work better. Measuring and analyzing doesn’t have to be burdensome or something that gets in the way of creating code. Software metrics should have several important characteristics. They should be:

- Simple and computable

- Consistent and unambiguous (objective)

- Expressed in consistent units of measurement

- Independent of programming languages

- Easy to calibrate and adaptable

- Easy and cost-effective to obtain

- Able to be validated for accuracy and reliability

- Relevant to the development of high-quality software products

This is why software development platforms that automatically measure and track metrics are important. But software development teams and management run the risk of having too much data and not enough emphasis on the software metrics that help deliver useful software to customers.

The technical question of how software metrics are collected, calculated and reported is not as important as deciding how to use software metrics. Patrick Kua outlines four guidelines for an appropriate use of software metrics:

1. Link software metrics to goals.

Often sets of software metrics are communicated to software development teams as goals. So the focus becomes:

- Reducing the lines of code

- Reducing the number of bugs reported

- Increasing the number of software iterations

- Speeding up the completion of tasks

Focusing on those metrics as targets is meant to help software developers reach more important goals such as improving software usefulness and user experience, but the targets can end up replacing the goals they were meant to serve.

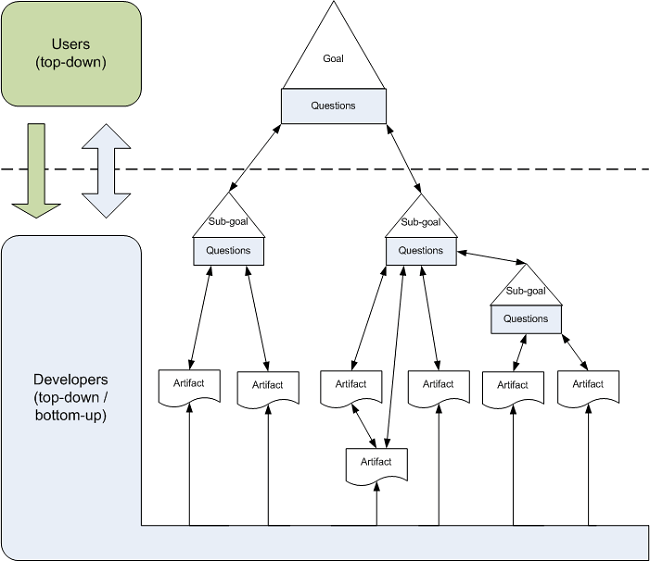

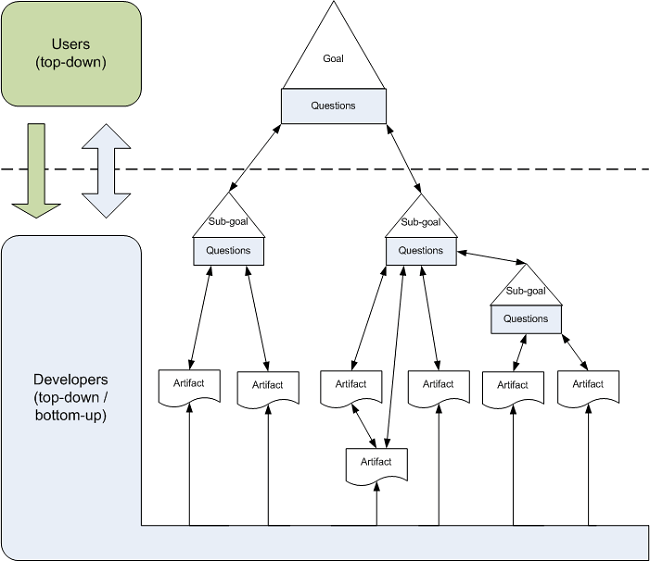

Image via Wikipedia

For example, size-based software metrics often measure lines of code to indicate coding complexity or software efficiency. In an effort to reduce the code’s complexity, management may place restrictions on how many lines of code are to be written to complete functions. To reach that target, software developers could write more functions that each have fewer lines of code, without reducing overall code complexity or improving software efficiency.

When developing goals, management needs to involve the software development teams in establishing goals, choosing software metrics that measure progress toward those goals and aligning metrics with those goals.

2. Track trends, not numbers.

Software metrics are very seductive to management because complex processes are represented as simple numbers. And those numbers are easy to compare to other numbers. So when a software metric target is met, it is easy to declare success. Not reaching that number lets software development teams know they need to work more on reaching that target.

These simple targets do not offer as much information on how the software metrics are trending. Any single data point is not as significant as the trend it is part of. Analysis of why the trend line is moving in a certain direction or at what rate it is moving will say more about the process. Trends also will show what effect any process changes have on progress.

The psychological effects of observing a trend – similar to the Hawthorne Effect, or changes in behavior resulting from awareness of being observed – can be greater than focusing on a single measurement. If the target is not met, that, unfortunately, can be seen as a failure. But a trend line showing progress toward a target offers incentive and insight into how to reach that target.
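To make the difference between a number and a trend concrete, here is a minimal sketch that compares a pass/fail check against a target with the slope of a least-squares trend line. The data, the target, and the function name are hypothetical, and the measurements are assumed to be taken at equally spaced periods.

```python
def trend_slope(values: list[float]) -> float:
    """Least-squares slope of values measured at equally spaced periods."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points to see a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


# Hypothetical open-bug counts at the end of five consecutive sprints.
open_bugs_per_sprint = [42, 40, 37, 35, 31]
target = 30

target_met = open_bugs_per_sprint[-1] <= target
slope = trend_slope(open_bugs_per_sprint)

print(target_met)  # False: the single number says the target was missed
print(slope)       # negative slope: the count is falling sprint over sprint
```

Read alone, the last data point looks like a failure. The slope shows steady progress toward the target, which is the information the team can act on.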

3. Set shorter measurement periods.

Software development teams want to spend their time getting the work done, not checking whether they are reaching management-established targets. So a hands-off approach might be to set the target sometime in the future and not bother the software team until it is time to tell them they succeeded or failed to reach the target.

By breaking the measurement periods into smaller time frames, the software development team can check the software metrics — and the trend line — to determine how well they are progressing.

Yes, that is an interruption, but giving software development teams more time to analyze their progress and change tactics when something is not working is very productive. The shorter periods of measurement offer more data points that can be useful in reaching goals, not just software metric targets.

4. Stop using software metrics that do not lead to change.

We all know that repeating the same actions without change while expecting different results is the definition of insanity. But repeating the same work, without adjustments, when it is not achieving goals is the definition of managing by metrics.

Why would software developers keep doing something that is not getting them closer to goals such as better software experiences? Because they are focusing on software metrics that do not measure progress toward that goal.

Some software metrics have no value when it comes to indicating software quality or team workflow. Management and software development teams need to work on software metrics that drive progress towards goals and provide verifiable, consistent indicators of progress.

Examples of Software Metrics

There is no standard set or definition of software metrics that has value for every software development team. Software metrics have different value to different teams, depending on each team’s goals.

As a starting point, here are some software metrics that can help developers track their progress.

Agile process metrics

Agile process metrics focus on how agile teams make decisions and plan. These metrics do not describe the software, but they can be used to improve the software development process.

Lead time

Lead time quantifies how long it takes for ideas to be developed and delivered as software. Lowering lead time is a way to improve how responsive software developers are to customers.

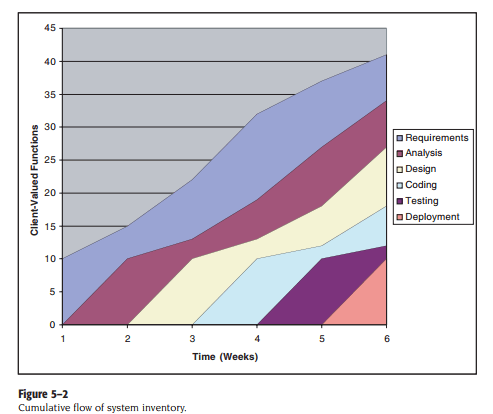

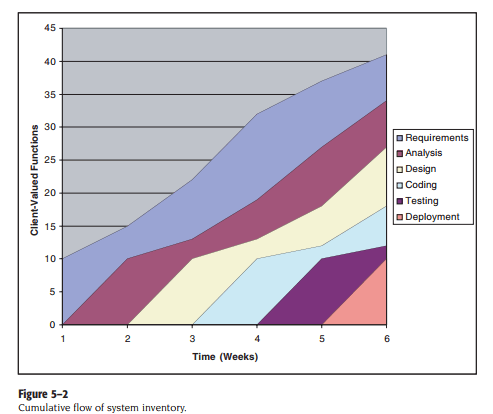

Screenshot via Pearsoned.co.uk

Cycle time

Cycle time describes how long it takes to change the software system and implement that change in production.
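As a minimal sketch, lead time and cycle time can both be derived from timestamps on a work item. The timestamps below are hypothetical, and the sketch assumes lead time starts when the idea is requested while cycle time starts when work on the change begins. Teams define these start points differently.

```python
from datetime import datetime


def elapsed_days(start: datetime, end: datetime) -> float:
    """Elapsed time between two timestamps, in days."""
    return (end - start).total_seconds() / 86400


# Hypothetical timestamps for a single work item.
requested = datetime(2017, 9, 1, 9, 0)     # idea is raised
work_started = datetime(2017, 9, 6, 9, 0)  # development begins
deployed = datetime(2017, 9, 8, 21, 0)     # change reaches production

lead_time = elapsed_days(requested, deployed)
cycle_time = elapsed_days(work_started, deployed)

print(lead_time)   # 7.5 days from request to production
print(cycle_time)  # 2.5 days from start of work to production
```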

Team velocity

Team velocity measures how many software units a team completes in an iteration or sprint. This is an internal metric that should not be used to compare software development teams. The definition of deliverables changes for individual software development teams over time and the definitions are different for different teams.

Open/close rates

Open/close rates are calculated by tracking how many production issues are reported and how many are closed in a specific time period. It is important to pay attention to how this software metric trends.
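One way to watch how this metric trends is to keep a running total of issues opened minus issues closed. This is a minimal sketch with hypothetical weekly counts and a hypothetical function name, not a prescribed formula.

```python
from itertools import accumulate


def backlog_trend(periods: list[tuple[int, int]]) -> list[int]:
    """Cumulative net change in open issues, given (opened, closed) per period."""
    return list(accumulate(opened - closed for opened, closed in periods))


# Hypothetical (opened, closed) production issue counts for three weeks.
weekly_counts = [(12, 9), (10, 11), (8, 12)]

print(backlog_trend(weekly_counts))  # [3, 2, -2]
```

A falling running total means issues are being closed faster than they are reported, regardless of the raw counts in any single week.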

Production

Production metrics attempt to measure how much work is done and determine the efficiency of software development teams. The software metrics that use speed as a factor are important to managers who want software delivered as fast as possible.

Active days

Active days is a measure of how much time a software developer contributes code to the software development project. This does not include planning and administrative tasks. The purpose of this software metric is to assess the hidden costs of interruptions.

Assignment scope

Assignment scope is the amount of code that a programmer can maintain and support in a year. This software metric can be used to plan how many people are needed to support a software system and compare teams.

Efficiency

Efficiency attempts to measure the amount of productive code contributed by a software developer. The amount of churn shows the lack of productive code. Thus a software developer with a low churn could have highly efficient code.

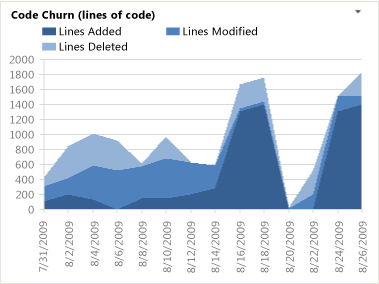

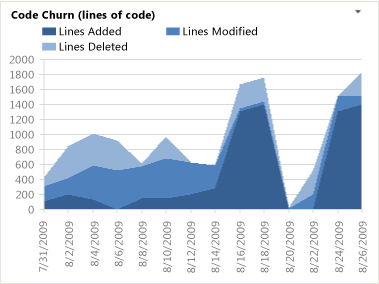

Code churn

Code churn represents the number of lines of code that were modified, added or deleted in a specified period of time. If code churn increases, then it could be a sign that the software development project needs attention.

Example Code Churn report, screenshot via Visual Studio
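For teams without a built-in churn report, a rough figure can be computed from version control history. The sketch below assumes a Git repository and parses text in the format produced by `git log --numstat --pretty=format:`, where each line holds added lines, deleted lines, and a file path separated by tabs. The function name and sample data are hypothetical.

```python
def churn_from_numstat(numstat_output: str) -> dict[str, int]:
    """Sum added and deleted lines from `git log --numstat` style text."""
    added = deleted = 0
    for line in numstat_output.splitlines():
        parts = line.split("\t")
        if len(parts) != 3:
            continue  # skip blank lines and anything that is not a stat row
        added_text, deleted_text, _path = parts
        if not (added_text.isdigit() and deleted_text.isdigit()):
            continue  # binary files are reported as "-" instead of counts
        added += int(added_text)
        deleted += int(deleted_text)
    return {"added": added, "deleted": deleted, "churn": added + deleted}


# Hypothetical output captured for one reporting period.
sample_numstat = "10\t2\tsrc/app.py\n-\t-\tlogo.png\n\n3\t7\tREADME.md\n"

print(churn_from_numstat(sample_numstat))
# {'added': 13, 'deleted': 9, 'churn': 22}
```

Git records a modified line as one deletion plus one addition, so this total counts each modification twice. That is acceptable for spotting trends as long as the same rule is used in every period.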

Impact

Impact measures the effect of any code change on the software development project. A code change that affects multiple files could have more impact than a code change affecting a single file.

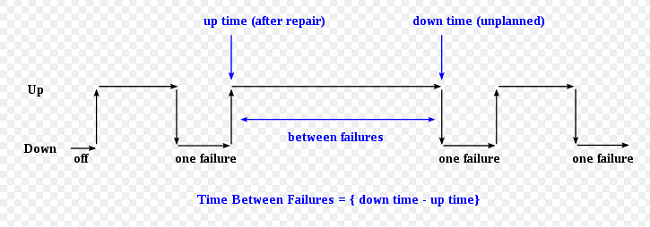

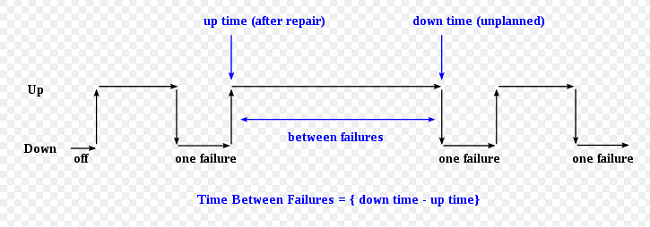

Mean time between failures (MTBF) and mean time to recover/repair (MTTR)

Both metrics measure how the software performs in the production environment. Since software failures are almost unavoidable, these software metrics attempt to quantify how well the software recovers and preserves data.

Image via Wikimedia Commons
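As a minimal sketch using the common definitions, MTBF is total operating time divided by the number of failures, and MTTR is total repair time divided by the number of failures. The function name and the monthly figures are hypothetical.

```python
def mtbf_and_mttr(uptime_hours: float, downtime_hours: float,
                  failures: int) -> tuple[float, float]:
    """Return (MTBF, MTTR) in hours for one observation window."""
    if failures <= 0:
        raise ValueError("at least one failure is needed to compute a mean")
    return uptime_hours / failures, downtime_hours / failures


# Hypothetical 720-hour month with 3 failures and 6 hours of total downtime.
mtbf, mttr = mtbf_and_mttr(uptime_hours=714, downtime_hours=6, failures=3)

print(mtbf)  # 238.0 hours between failures on average
print(mttr)  # 2.0 hours to recover on average
```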

Application crash rate (ACR)

Application crash rate is calculated by dividing how many times an application fails (F) by how many times it is used (U).

ACR = F/U
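The formula translates directly into code. In this minimal sketch the function name and the sample counts are hypothetical, and what counts as a "use" (a session, a launch, a request) is a definition each team has to fix for itself.

```python
def application_crash_rate(failures: int, uses: int) -> float:
    """ACR = F / U, the share of uses that ended in a failure."""
    if uses <= 0:
        raise ValueError("the application must have been used at least once")
    return failures / uses


# Hypothetical figures: 12 crashes across 4,000 sessions.
acr = application_crash_rate(failures=12, uses=4000)
print(f"{acr:.2%}")  # 0.30%
```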

Security metrics

Security metrics reflect a measure of software quality. These metrics need to be tracked over time to show how software development teams are developing security responses.

Endpoint incidents

Endpoint incidents are how many devices have been infected by a virus in a given period of time.

Mean time to repair (MTTR)

Mean time to repair in this context measures the time from the security breach discovery to when a working remedy is deployed.

Size-oriented metrics

Size-oriented metrics focus on the size of the software and are usually expressed per thousand lines of code (KLOC). They are fairly easy software metrics to collect once decisions are made about what constitutes a line of code. Unfortunately, they are not useful for comparing software projects written in different languages. Some examples include:

- Errors per KLOC

- Defects per KLOC

- Cost per KLOC
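Each of the size-oriented metrics above is a quantity normalized by code size. A minimal sketch, with a hypothetical function name and hypothetical project figures:

```python
def per_kloc(amount: float, lines_of_code: int) -> float:
    """Normalize a quantity (defects, errors, cost) per 1,000 lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return amount / (lines_of_code / 1000)


# Hypothetical project: 30,000 lines of code, 45 defects, 90,000 in cost.
defects_per_kloc = per_kloc(45, 30_000)
cost_per_kloc = per_kloc(90_000, 30_000)

print(defects_per_kloc)  # 1.5 defects per KLOC
print(cost_per_kloc)     # 3000.0 cost units per KLOC
```

The result is only comparable between projects that count lines of code the same way.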

Function-oriented metrics

Function-oriented metrics focus on how much functionality software offers. But functionality cannot be measured directly. So function-oriented software metrics rely on calculating the function point (FP) — a unit of measurement that quantifies the business functionality provided by the product. Function points are also useful for comparing software projects written in different languages.

Function points are not an easy concept to master and methods vary. This is why many software development managers and teams skip function points altogether. They do not perceive function points as worth the time.

Errors per FP or Defects per FP

These software metrics are used as indicators of an information system’s quality. Software development teams can use these software metrics to reduce miscommunications and introduce new control measures.

Defect Removal Efficiency (DRE)

The Defect Removal Efficiency is used to quantify how many defects were found by the end user after product delivery (D) in relation to the errors found before product delivery (E). The formula is:

DRE = E / (E+D)

The closer DRE is to 1, the fewer defects are found after product delivery relative to those caught before it.
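A minimal sketch of the DRE formula, using the same symbols E and D. The function name and the sample counts are hypothetical.

```python
def defect_removal_efficiency(errors_before: int, defects_after: int) -> float:
    """DRE = E / (E + D): share of all known defects caught before delivery."""
    total = errors_before + defects_after
    if total == 0:
        raise ValueError("no errors or defects recorded, DRE is undefined")
    return errors_before / total


# Hypothetical release: 95 errors found before delivery, 5 reported by users.
dre = defect_removal_efficiency(errors_before=95, defects_after=5)
print(dre)  # 0.95
```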

With dozens of potential software metrics to track, it’s crucial for development teams to evaluate their needs and select metrics that are aligned with business goals, relevant to the project, and represent valid measures of progress. Monitoring the right metrics (as opposed to not monitoring metrics at all or monitoring metrics that don’t really matter) can mean the difference between a highly efficient, productive team and a floundering one. The same is true of software testing: using the right tests to evaluate the right features and functions is the key to success. (Check out our guide on software testing to learn more about the various testing types.)

While the process of defining goals, selecting metrics, and implementing consistent measurement methods can be time-consuming, the productivity gains and time saved over the life of a project make it time well invested. Various software metrics are incorporated into solutions such as application performance management (APM) tools, along with data and insights on application usage, code performance, slow requests, and much more. Retrace, Stackify’s APM solution, combines APM, logs, errors, monitoring, and metrics in one, providing a fully integrated, multi-environment application performance solution to level up your development work. For more insights on DevOps and Big Data, check out Stackify’s interview with John Sumser of HR Examiner, one of Forbes Magazine’s 20 to Watch in Big Data.
